Basic Usage of AVPlayer

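There are generally two ways to play video in iOS development: MPMoviePlayerController (which requires importing MediaPlayer.framework) and AVPlayer. For the differences between the two classes, see http://stackoverflow.com/questions/8146942/avplayer-and-mpmovieplayercontroller-differences. In short, MPMoviePlayerController is simpler to use but less capable, while AVPlayer takes a bit more work and offers much more control. This post covers the basics of AVPlayer. I have only just started working with it myself, so if you spot a problem, please point it out~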

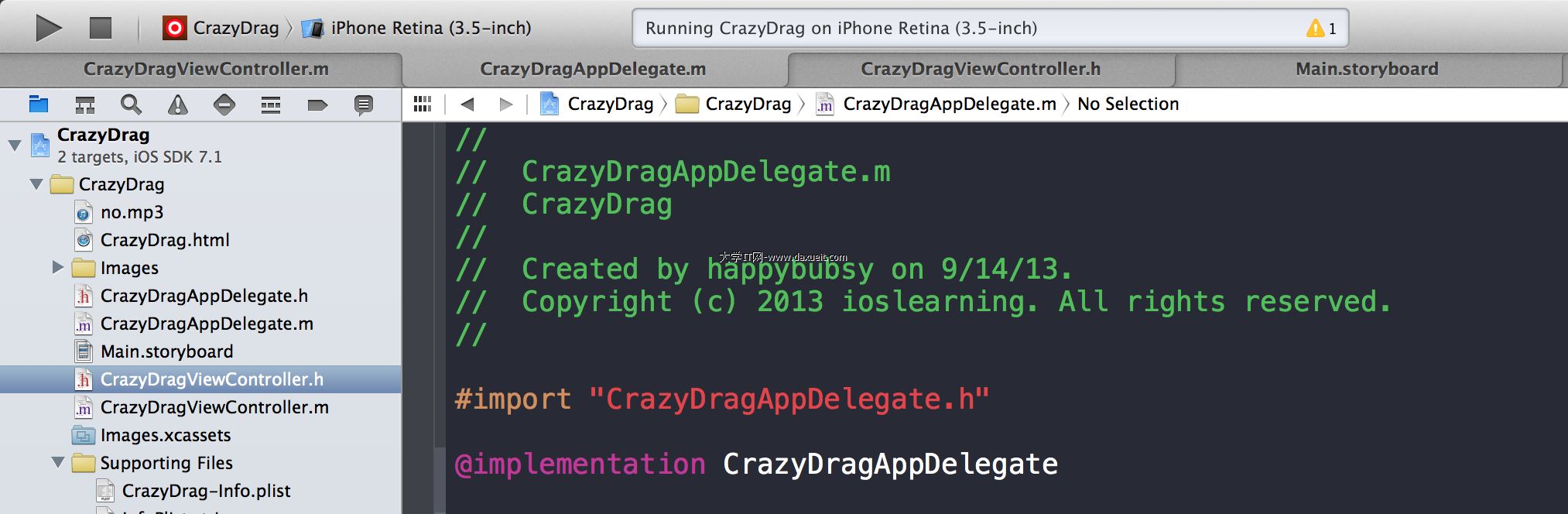

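AVPlayer by itself cannot display video: the player has to be attached to an AVPlayerLayer before any picture shows up. So start by adding the following properties to the ViewController's @interface: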

    @property (nonatomic, strong) AVPlayer *player;
    @property (nonatomic, strong) AVPlayerItem *playerItem;
    @property (nonatomic, weak) IBOutlet PlayerView *playerView;

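playerView is a UIView subclass whose player setter and getter are overridden so that the player is attached to the view's AVPlayerLayer, which is what actually makes the video visible.

PlayerView.h declares an AVPlayer property. A view's default layer is a CALayer, but an AVPlayer can only be attached to an AVPlayerLayer, so we override layerClass to change the backing layer of PlayerView. After that, the AVPlayer created in the view controller can be assigned to the AVPlayerLayer's player property.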

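PlayerView.h

    #import <UIKit/UIKit.h>
    #import <AVFoundation/AVFoundation.h>

    @interface PlayerView : UIView
    @property (nonatomic, strong) AVPlayer *player;
    @end

PlayerView.m

    @implementation PlayerView

    // Back this view with an AVPlayerLayer instead of a plain CALayer.
    + (Class)layerClass {
        return [AVPlayerLayer class];
    }

    // Forward the player property to the backing AVPlayerLayer.
    - (AVPlayer *)player {
        return [(AVPlayerLayer *)[self layer] player];
    }

    - (void)setPlayer:(AVPlayer *)player {
        [(AVPlayerLayer *)[self layer] setPlayer:player];
    }

    @end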

Then do the initialization in viewDidLoad:

    NSURL *videoUrl = [NSURL URLWithString:@"http://www.jxvdy.com/file/upload/201405/05/18-24-58-42-627.mp4"];
    self.playerItem = [AVPlayerItem playerItemWithURL:videoUrl];
    // Observe the status property
    [self.playerItem addObserver:self forKeyPath:@"status" options:NSKeyValueObservingOptionNew context:nil];
    // Observe the loadedTimeRanges property
    [self.playerItem addObserver:self forKeyPath:@"loadedTimeRanges" options:NSKeyValueObservingOptionNew context:nil];
    self.player = [AVPlayer playerWithPlayerItem:self.playerItem];
    // Hand the player to the PlayerView so its AVPlayerLayer can render the video
    self.playerView.player = self.player;
    [[NSNotificationCenter defaultCenter] addObserver:self selector:@selector(moviePlayDidEnd:) name:AVPlayerItemDidPlayToEndTimeNotification object:self.playerItem];

The URL of the online video is stored in videoUrl, and playerItem is initialized from it. playerItem is the object that manages the media resource. (A player item manages the presentation state of an asset with which it is associated. A player item contains player item tracks (instances of AVPlayerItemTrack) that correspond to the tracks in the asset.)

Next, observe the playerItem's status and loadedTimeRanges properties. status has three possible values:

AVPlayerItemStatusUnknown,

AVPlayerItemStatusReadyToPlay,

AVPlayerItemStatusFailed

When status becomes AVPlayerItemStatusReadyToPlay, the video is ready and we can call play to start playback.
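A minimal sketch of a play/pause toggle that could be wired to stateButton once the item is ready. The action name stateButtonTouched: and the button titles are assumptions made for illustration; the post does not show this handler.

```objc
#import <AVFoundation/AVFoundation.h>

// Goes inside the view controller's @implementation.
// Hypothetical action connected to stateButton's touch event.
- (IBAction)stateButtonTouched:(id)sender {
    AVPlayer *player = self.playerView.player;
    if (player.rate == 0.0f) {
        // A rate of 0 means playback is currently paused
        [player play];
        [self.stateButton setTitle:@"Pause" forState:UIControlStateNormal];
    } else {
        [player pause];
        [self.stateButton setTitle:@"Play" forState:UIControlStateNormal];
    }
}
```

Checking rate avoids keeping a separate "is playing" flag in the view controller.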

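The loadedTimeRanges property describes how much of the video has been buffered. Observing it lets the UI show buffering progress, which makes it a very useful property.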
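loadedTimeRanges is an array and can hold more than one range, for example after a seek. As a rough sketch, the helper below looks for the range that contains the current playback position and returns where that range ends. The method name bufferedEndTimeForItem: is hypothetical and is not part of the original demo.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: the time (in seconds) up to which playback can
// continue from the current position without waiting for more data.
- (NSTimeInterval)bufferedEndTimeForItem:(AVPlayerItem *)item {
    CMTime now = item.currentTime;
    for (NSValue *value in item.loadedTimeRanges) {
        CMTimeRange range = [value CMTimeRangeValue];
        if (CMTimeRangeContainsTime(range, now)) {
            return CMTimeGetSeconds(CMTimeRangeGetEnd(range));
        }
    }
    // No loaded range covers the playhead yet
    return 0.0;
}
```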

Finally, register for a notification that fires when the video has played to the end. Then implement the KVO callback:

    - (void)observeValueForKeyPath:(NSString *)keyPath ofObject:(id)object change:(NSDictionary *)change context:(void *)context {
        AVPlayerItem *playerItem = (AVPlayerItem *)object;
        if ([keyPath isEqualToString:@"status"]) {
            if ([playerItem status] == AVPlayerItemStatusReadyToPlay) {
                NSLog(@"AVPlayerItemStatusReadyToPlay");
                self.stateButton.enabled = YES;
                CMTime duration = self.playerItem.duration; // total length of the video
                CGFloat totalSecond = CMTimeGetSeconds(duration); // convert to seconds
                _totalTime = [self convertTime:totalSecond]; // convert to a display string
                [self customVideoSlider:duration]; // customize the UISlider appearance
                NSLog(@"movie total duration:%f", CMTimeGetSeconds(duration));
                [self monitoringPlayback:self.playerItem]; // start observing playback progress
            } else if ([playerItem status] == AVPlayerItemStatusFailed) {
                NSLog(@"AVPlayerItemStatusFailed: %@", playerItem.error);
            }
        } else if ([keyPath isEqualToString:@"loadedTimeRanges"]) {
            NSTimeInterval timeInterval = [self availableDuration]; // buffered progress
            NSLog(@"Time Interval:%f", timeInterval);
            CMTime duration = self.playerItem.duration;
            CGFloat totalDuration = CMTimeGetSeconds(duration);
            // The duration is not a usable number until the item is ready to play
            if (totalDuration > 0) {
                [self.videoProgress setProgress:timeInterval / totalDuration animated:YES];
            }
        }
    }

    - (NSTimeInterval)availableDuration {
        NSArray *loadedTimeRanges = [[self.playerView.player currentItem] loadedTimeRanges];
        if (loadedTimeRanges.count == 0) {
            return 0; // nothing buffered yet
        }
        CMTimeRange timeRange = [loadedTimeRanges.firstObject CMTimeRangeValue]; // buffered range
        Float64 startSeconds = CMTimeGetSeconds(timeRange.start);
        Float64 durationSeconds = CMTimeGetSeconds(timeRange.duration);
        NSTimeInterval result = startSeconds + durationSeconds; // end of the buffered range
        return result;
    }

    - (NSString *)convertTime:(CGFloat)second {
        NSDate *d = [NSDate dateWithTimeIntervalSince1970:second];
        NSDateFormatter *formatter = [[NSDateFormatter alloc] init];
        // Format in UTC; otherwise the local time zone offset leaks into the result
        formatter.timeZone = [NSTimeZone timeZoneForSecondsFromGMT:0];
        if (second / 3600 >= 1) {
            [formatter setDateFormat:@"HH:mm:ss"];
        } else {
            [formatter setDateFormat:@"mm:ss"];
        }
        NSString *showtimeNew = [formatter stringFromDate:d];
        return showtimeNew;
    }

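This method responds to changes in status and loadedTimeRanges. When status becomes AVPlayerItemStatusReadyToPlay, the video is playable, so we can read information such as its duration and enable the play button; tapping the button then calls play. At the end of the ready-to-play branch there is also a call to monitoringPlayback: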

    - (void)monitoringPlayback:(AVPlayerItem *)playerItem {
        __weak typeof(self) weakSelf = self; // avoid a retain cycle through the stored observer
        self.playbackTimeObserver = [self.playerView.player addPeriodicTimeObserverForInterval:CMTimeMake(1, 1) queue:NULL usingBlock:^(CMTime time) {
            __strong typeof(weakSelf) strongSelf = weakSelf;
            if (!strongSelf) {
                return;
            }
            CGFloat currentSecond = CMTimeGetSeconds(time); // current playback position in seconds
            [strongSelf updateVideoSlider:currentSecond];
            NSString *timeString = [strongSelf convertTime:currentSecond];
            strongSelf.timeLabel.text = [NSString stringWithFormat:@"%@/%@", timeString, strongSelf->_totalTime];
        }];
    }

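monitoringPlayback reports playback progress once per second. The key is - (id)addPeriodicTimeObserverForInterval:(CMTime)interval queue:(dispatch_queue_t)queue usingBlock:(void (^)(CMTime time))block;. The interval parameter is how often the block is called, here once every second. queue is the dispatch queue the block runs on, and passing NULL means the main queue. The block is a good place to update the UI, for example the current time shown next to the progress bar.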
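Every observer registered so far needs a matching removal, and the post does not show that part. The following is a sketch of one possible teardown, placed in dealloc as an assumption. It also assumes the observer block does not keep the view controller alive (for example by capturing self weakly); otherwise dealloc is never reached and the same calls would have to move somewhere like viewDidDisappear:.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical teardown inside the view controller's @implementation.
- (void)dealloc {
    // Balance the two addObserver:forKeyPath: calls made in viewDidLoad
    [self.playerItem removeObserver:self forKeyPath:@"status"];
    [self.playerItem removeObserver:self forKeyPath:@"loadedTimeRanges"];

    // Stop listening for the end-of-playback notification
    [[NSNotificationCenter defaultCenter] removeObserver:self
                                                    name:AVPlayerItemDidPlayToEndTimeNotification
                                                  object:self.playerItem];

    // The token returned by addPeriodicTimeObserverForInterval: must be
    // passed back to the same player that created it
    if (self.playbackTimeObserver) {
        [self.player removeTimeObserver:self.playbackTimeObserver];
        self.playbackTimeObserver = nil;
    }
}
```

self.player is used here rather than self.playerView.player because playerView is a weak outlet and may already be gone at this point.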

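Besides play and pause, a player needs one more essential feature: showing the current playback position along with the buffered range. My approach is to use a UIProgressView for the buffered, playable range and a UISlider for the current playback position. The UISlider needs some customization for this, as follows: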

    - (void)customVideoSlider:(CMTime)duration {
        self.videoSlider.maximumValue = CMTimeGetSeconds(duration);
        // Render an empty 1x1 context to get a fully transparent image
        UIGraphicsBeginImageContextWithOptions((CGSize){ 1, 1 }, NO, 0.0f);
        UIImage *transparentImage = UIGraphicsGetImageFromCurrentImageContext();
        UIGraphicsEndImageContext();
        // Hide both halves of the track so that only the thumb remains visible
        [self.videoSlider setMinimumTrackImage:transparentImage forState:UIControlStateNormal];
        [self.videoSlider setMaximumTrackImage:transparentImage forState:UIControlStateNormal];
    }

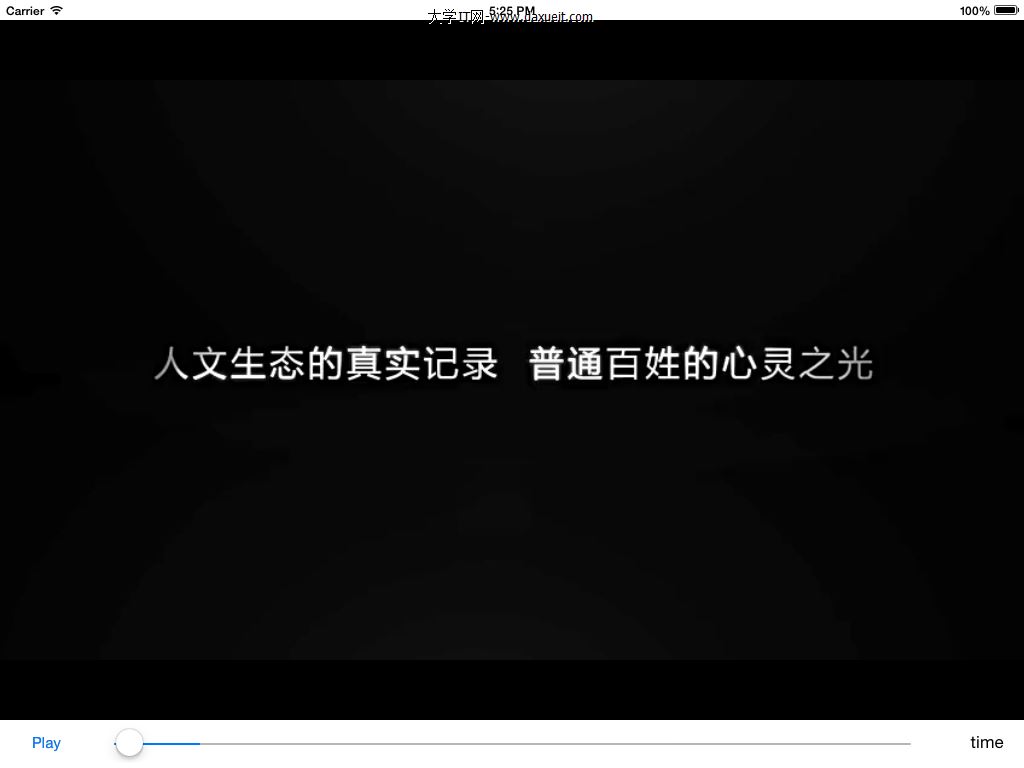

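Now the UISlider shows only its thumb image in the middle. The track on both sides of the thumb is transparent, so the slider only indicates the current playback position. The UIProgressView shows the buffered range and is left uncustomized in the storyboard.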

Place the UISlider on top of the UIProgressView, so that at run time the thumb moves across the buffered-progress bar.
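The periodic observer calls updateVideoSlider:, whose body is not shown in the post. The sketch below is one plausible implementation, together with a hypothetical videoSliderChanged: action that seeks when the user drags the thumb. Both bodies and the timescale of 600 are assumptions.

```objc
#import <AVFoundation/AVFoundation.h>

// Assumed implementation: move the thumb to the current second.
- (void)updateVideoSlider:(CGFloat)currentSecond {
    [self.videoSlider setValue:currentSecond animated:YES];
}

// Hypothetical action for the slider's Value Changed event.
- (IBAction)videoSliderChanged:(UISlider *)slider {
    // The slider's maximumValue is the duration in seconds,
    // so its value maps directly to a time in the video
    CMTime target = CMTimeMakeWithSeconds(slider.value, 600);
    [self.playerView.player seekToTime:target completionHandler:^(BOOL finished) {
        if (!finished) {
            NSLog(@"seek interrupted by a newer request");
        }
    }];
}
```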

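That completes the basic buffering display. Some features are still missing, such as volume control and swiping the screen to fast-forward or rewind; try building them yourself when you have time~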
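The setup code registers moviePlayDidEnd: for AVPlayerItemDidPlayToEndTimeNotification, but the handler itself is not shown. A minimal sketch, assuming the desired behavior is to rewind to the start and reset the slider:

```objc
#import <AVFoundation/AVFoundation.h>

// Assumed handler for AVPlayerItemDidPlayToEndTimeNotification.
- (void)moviePlayDidEnd:(NSNotification *)notification {
    // The notification is not guaranteed to arrive on the main thread,
    // so hop to the main queue before touching UIKit
    dispatch_async(dispatch_get_main_queue(), ^{
        [self.playerView.player seekToTime:kCMTimeZero];
        [self.videoSlider setValue:0.0f animated:YES];
    });
}
```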

Finally, here is the demo: https://github.com/mzds/AVPlayerDemo
