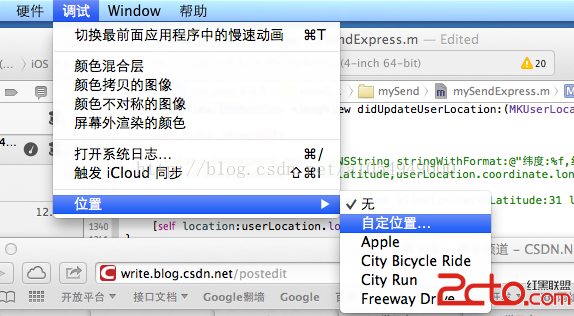

Simple portrait cutout on iOS with OpenCV

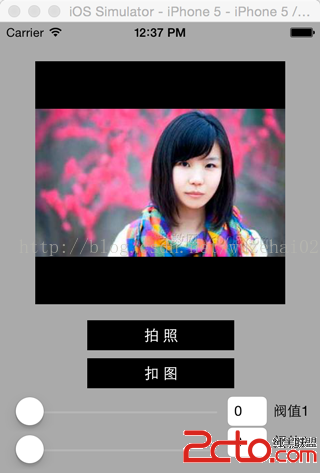

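Recently I needed to implement portrait cutout (matting). The approaches I found online fall into two main kinds: color-range keying with Core Image, and edge-based segmentation with OpenCV. The first suits solid-color backgrounds and cuts precisely; the second suits complex backgrounds, but its default result is not precise, as the images below show.

1. Photo before processing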

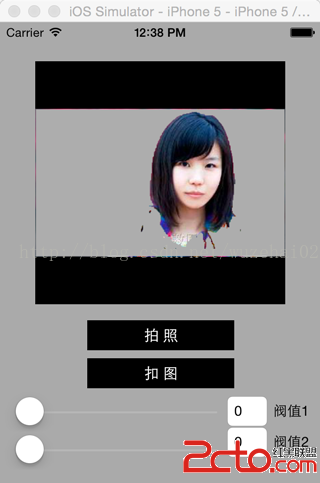

2. Photo after processing

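Core Image implementations are already all over the web, along with plenty of articles, so I won't go into detail. I'll just paste my own code, which works as pasted. Don't forget to import CubeMap.c.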

// Core Image cutout: createCubeMap(value1, value2), each value in the range 0~360; colors between value1 and value2 are removed

CubeMap myCube = createCubeMap(self.slider1.value, self.slider2.value);

NSData *myData = [[NSData alloc]initWithBytesNoCopy:myCube.data length:myCube.length freeWhenDone:true];

CIFilter *colorCubeFilter = [CIFilter filterWithName:@"CIColorCube"];

[colorCubeFilter setValue:[NSNumber numberWithFloat:myCube.dimension] forKey:@"inputCubeDimension"];

[colorCubeFilter setValue:myData forKey:@"inputCubeData"];

[colorCubeFilter setValue:[CIImage imageWithCGImage:_preview.image.CGImage] forKey:kCIInputImageKey];

CIImage *outputImage = colorCubeFilter.outputImage;

CIFilter *sourceOverCompositingFilter = [CIFilter filterWithName:@"CISourceOverCompositing"];

[sourceOverCompositingFilter setValue:outputImage forKey:kCIInputImageKey];

[sourceOverCompositingFilter setValue:[CIImage imageWithCGImage:backgroundImage.CGImage] forKey:kCIInputBackgroundImageKey];

outputImage = sourceOverCompositingFilter.outputImage;

CGImageRef cgImage = [[CIContext contextWithOptions:nil] createCGImage:outputImage fromRect:outputImage.extent];
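CubeMap.c itself is not shown in this post, so the following is only a minimal sketch of what a cube builder of this kind might look like, not the actual file. It assumes the two slider values are hue angles in degrees, and the names `hueDegrees` and `buildHueKeyCube` are hypothetical. The output layout (RGBA floats, premultiplied alpha, red varying fastest) is the one CIColorCube expects.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hue of an RGB color in degrees [0, 360); components are in [0, 1].
// Grays have no defined hue, so they return -1 and are never keyed out.
static float hueDegrees(float r, float g, float b) {
    const float maxC = std::max({r, g, b});
    const float minC = std::min({r, g, b});
    const float delta = maxC - minC;
    if (delta <= 0.0f) return -1.0f;
    float h;
    if (maxC == r)      h = std::fmod((g - b) / delta, 6.0f);
    else if (maxC == g) h = (b - r) / delta + 2.0f;
    else                h = (r - g) / delta + 4.0f;
    h *= 60.0f;
    if (h < 0.0f) h += 360.0f;
    return h;
}

// Builds size^3 RGBA entries (size >= 2). Colors whose hue lies in
// [minHue, maxHue] become fully transparent; all others pass through.
std::vector<float> buildHueKeyCube(int size, float minHue, float maxHue) {
    std::vector<float> cube(static_cast<std::size_t>(size) * size * size * 4);
    std::size_t k = 0;
    for (int z = 0; z < size; ++z) {            // blue axis
        for (int y = 0; y < size; ++y) {        // green axis
            for (int x = 0; x < size; ++x) {    // red axis, varies fastest
                const float r = static_cast<float>(x) / (size - 1);
                const float g = static_cast<float>(y) / (size - 1);
                const float b = static_cast<float>(z) / (size - 1);
                const float h = hueDegrees(r, g, b);
                const float alpha = (h >= minHue && h <= maxHue) ? 0.0f : 1.0f;
                cube[k++] = r * alpha;          // premultiplied red
                cube[k++] = g * alpha;          // premultiplied green
                cube[k++] = b * alpha;          // premultiplied blue
                cube[k++] = alpha;
            }
        }
    }
    return cube;
}
```

The resulting buffer would be wrapped in an NSData and handed to the filter as inputCubeData, with `size` as inputCubeDimension. A green screen, for example, might use a range of roughly 100 to 140 degrees.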

Next, here is how to do the cutout on iOS with OpenCV.

Download opencv2. For how to set up OpenCV on iOS, see my earlier blog post: http://blog.csdn.net/wuzehai02/article/details/8439778

Object tracking on iOS with OpenCV

Import the following headers:

#import

#import "UIImage+OpenCV.h"

The UIImage+OpenCV category

//

// UIImage+OpenCV.h

#import

#import

@interface UIImage (UIImage_OpenCV)

+(UIImage *)imageWithCVMat:(const cv::Mat&)cvMat;

-(id)initWithCVMat:(const cv::Mat&)cvMat;

@property(nonatomic,readonly) cv::Mat CVMat;

@property(nonatomic,readonly) cv::Mat CVGrayscaleMat;

@end

//

// UIImage+OpenCV.mm

#import "UIImage+OpenCV.h"

static void ProviderReleaseDataNOP(void *info, const void *data, size_t size)

{

// Do not release memory

return;

}

@implementation UIImage (UIImage_OpenCV)

-(cv::Mat)CVMat

{

CGColorSpaceRef colorSpace =CGImageGetColorSpace(self.CGImage);

CGFloat cols =self.size.width;

CGFloat rows =self.size.height;

cv::Mat cvMat(rows, cols,CV_8UC4); // 8 bits per component, 4 channels

CGContextRef contextRef = CGBitmapContextCreate(cvMat.data, // Pointer to backing data

cols, // Width of bitmap

rows, // Height of bitmap

8, // Bits per component

cvMat.step[0], // Bytes per row

colorSpace, // Colorspace

kCGImageAlphaNoneSkipLast |

kCGBitmapByteOrderDefault); // Bitmap info flags

CGContextDrawImage(contextRef, CGRectMake(0, 0, cols, rows), self.CGImage);

CGContextRelease(contextRef);

return cvMat;

}

-(cv::Mat)CVGrayscaleMat

{

CGColorSpaceRef colorSpace =CGColorSpaceCreateDeviceGray();

CGFloat cols =self.size.width;

CGFloat rows =self.size.height;

cv::Mat cvMat =cv::Mat(rows, cols,CV_8UC1); // 8 bits per component, 1 channel

CGContextRef contextRef = CGBitmapContextCreate(cvMat.data, // Pointer to backing data

cols, // Width of bitmap

rows, // Height of bitmap

8, // Bits per component

cvMat.step[0], // Bytes per row

colorSpace, // Colorspace

kCGImageAlphaNone |

kCGBitmapByteOrderDefault); // Bitmap info flags

CGContextDrawImage(contextRef, CGRectMake(0, 0, cols, rows), self.CGImage);

CGContextRelease(contextRef);

CGColorSpaceRelease(colorSpace);

return cvMat;

}

+ (UIImage *)imageWithCVMat:(const cv::Mat&)cvMat

{

return [[[UIImage alloc] initWithCVMat:cvMat] autorelease];

}

- (id)initWithCVMat:(const cv::Mat&)cvMat

{

NSData *data = [NSData dataWithBytes:cvMat.data length:cvMat.elemSize() * cvMat.total()];

CGColorSpaceRef colorSpace;

if (cvMat.elemSize() == 1)

{

colorSpace = CGColorSpaceCreateDeviceGray();

}

else

{

colorSpace = CGColorSpaceCreateDeviceRGB();

}

CGDataProviderRef provider =CGDataProviderCreateWithCFData((CFDataRef)data);

CGImageRef imageRef = CGImageCreate(cvMat.cols, // Width

cvMat.rows, // Height

8, // Bits per component

8 * cvMat.elemSize(), // Bits per pixel

cvMat.step[0], // Bytes per row

colorSpace, // Colorspace

kCGImageAlphaNone | kCGBitmapByteOrderDefault, // Bitmap info flags

provider, // CGDataProviderRef

NULL, // Decode

false, // Should interpolate

kCGRenderingIntentDefault); // Intent

self = [self initWithCGImage:imageRef];

CGImageRelease(imageRef);

CGDataProviderRelease(provider);

CGColorSpaceRelease(colorSpace);

return self;

}

@end

That was all preparation. The actual code is quite simple:

cv::Mat grayFrame,_lastFrame, mask,bgModel,fgModel;

_lastFrame = [self.preview.image CVMat];

cv::cvtColor(_lastFrame, grayFrame, cv::COLOR_RGBA2BGR); // convert to 3-channel BGR, which grabCut requires

cv::Rect rectangle(1, 1, grayFrame.cols - 2, grayFrame.rows - 2); // region to segment

// Segment the image

cv::grabCut(grayFrame, mask, rectangle, bgModel, fgModel, 3, cv::GC_INIT_WITH_RECT); // OpenCV's powerful cutout function

int nrow = grayFrame.rows;

int ncol = grayFrame.cols * grayFrame.channels();

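for(int j=0; j<nrow; j++){

for(int i=0; i<grayFrame.cols; i++){ // the mask has one entry per pixel, so iterate over cols rather than ncol

uchar val = mask.at<uchar>(j,i);

if(val==cv::GC_PR_BGD){

// The assigned values were lost from the source; painting the pixel white (255) is an assumption

grayFrame.at<cv::Vec3b>(j,i)[0] = 255;

grayFrame.at<cv::Vec3b>(j,i)[1] = 255;

grayFrame.at<cv::Vec3b>(j,i)[2] = 255;

}

}

}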

cv::cvtColor(grayFrame, grayFrame, cv::COLOR_BGR2RGB); // convert BGR back to RGB for display

_preview.image = [[UIImage alloc] initWithCVMat:grayFrame]; // show the result
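The loop above only tests for GC_PR_BGD. With GC_INIT_WITH_RECT, pixels outside the rectangle are labeled GC_BGD, so the one-pixel border left out by `rectangle` keeps its original color. A minimal sketch of a variant that treats both background labels alike follows, written on plain buffers so it runs without OpenCV. The helper name `whitenBackground` and the tightly packed 3-channel layout are assumptions for illustration.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Label values used by OpenCV's GrabCut mask.
constexpr std::uint8_t kBgd = 0;    // GC_BGD: definite background
constexpr std::uint8_t kFgd = 1;    // GC_FGD: definite foreground
constexpr std::uint8_t kPrBgd = 2;  // GC_PR_BGD: probable background
constexpr std::uint8_t kPrFgd = 3;  // GC_PR_FGD: probable foreground

// Paints every background pixel (definite or probable) white in a tightly
// packed 3-channel 8-bit image. `mask` holds one label per pixel.
void whitenBackground(std::vector<std::uint8_t>& pixels,
                      const std::vector<std::uint8_t>& mask) {
    for (std::size_t p = 0; p < mask.size() && 3 * p + 2 < pixels.size(); ++p) {
        // Both foreground labels (1 and 3) have the lowest bit set.
        const bool foreground = (mask[p] & 1) != 0;
        if (!foreground) {
            pixels[3 * p + 0] = 255;
            pixels[3 * p + 1] = 255;
            pixels[3 * p + 2] = 255;
        }
    }
}
```

With a cv::Mat the same idea can be written as a test on `mask.at<uchar>(j,i) & 1` inside the existing loop.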

The code above has been tested and works. The key piece is OpenCV's grabCut image segmentation function.

The grabCut API is described below:

void cv::grabCut( InputArray _img, InputOutputArray _mask, Rect rect,

InputOutputArray _bgdModel, InputOutputArray _fgdModel,

int iterCount, int mode )

/*

**** Parameters:

img: the source image to segment; it must be an 8-bit 3-channel (CV_8UC3) image and is not modified during processing;

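mask: the mask image. When initializing from a mask, it holds the initial labels; during segmentation you can also store user-marked foreground and background in it and pass it back into grabCut; when processing finishes, it holds the result. Each element can take only one of these four values:

GC_BGD (=0), background;

GC_FGD (=1), foreground;

GC_PR_BGD (=2), probable background;

GC_PR_FGD (=3), probable foreground.

If nothing is marked by hand as GC_BGD or GC_FGD, the pixels inside rect end up only as GC_PR_BGD or GC_PR_FGD;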

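rect: the region that contains the object to segment; only the part of the image inside this rectangle is segmented, and pixels outside it are treated as definite background. It is used only when mode is GC_INIT_WITH_RECT;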

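bgdModel: the background model; if it is empty, the function creates one internally; otherwise it must be a single-channel double-precision (CV_64FC1) matrix with exactly 1 row and 13x5 columns;

fgdModel: the foreground model; if it is empty, the function creates one internally; otherwise it must be a single-channel double-precision (CV_64FC1) matrix with exactly 1 row and 13x5 columns;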

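iterCount: the number of iterations; must be greater than 0;

mode: which operation grabCut performs; possible values:

GC_INIT_WITH_RECT (=0), initialize GrabCut from the rectangle;

GC_INIT_WITH_MASK (=1), initialize GrabCut from the mask image;

GC_EVAL (=2), run the segmentation, continuing from the existing mask and models.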

*/
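The "13x5" column count for bgdModel and fgdModel comes from 5 Gaussian components with 13 values each (1 weight, 3 mean values, 9 covariance values). The sketch below shows that arithmetic, assuming the layout used in OpenCV's grabcut.cpp as I understand it: all weights first, then all means, then all covariances. The names `GmmOffsets` and `offsetsFor` are hypothetical.

```cpp
// Layout constants; the ordering is an assumption based on my reading
// of OpenCV's grabcut.cpp, not part of the documented API.
constexpr int kComponents = 5;                  // Gaussians per model
constexpr int kValuesPerComponent = 1 + 3 + 9;  // weight + mean + 3x3 covariance
constexpr int kModelCols = kValuesPerComponent * kComponents;  // 13 x 5 = 65

// Column offsets of one component's data inside the 1 x 65 model row.
struct GmmOffsets {
    int weight;  // 1 value
    int mean;    // 3 values
    int cov;     // 9 values
};

// Offsets for component c (0 <= c < kComponents).
constexpr GmmOffsets offsetsFor(int c) {
    return GmmOffsets{c,
                      kComponents + 3 * c,
                      kComponents + 3 * kComponents + 9 * c};
}
```

In practice you rarely build these matrices by hand: passing empty cv::Mat objects, as the code earlier in this post does, lets grabCut allocate them.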